PPC (pay per click) is a key component of many online marketing campaigns. And while it can drive significant revenue, it’s also one of the most expensive ongoing costs in a campaign. Therefore, it’s key that you test your ads regularly, to make sure you aren’t letting any conversions slip through the cracks.

Testing and optimizing is an important part of our job as digital marketers. And I’m not just talking about perfecting your ad copy.

At SMX London earlier this year, I gave a talk on how we design and implement tests at Crealytics for both Text and Shopping ads.

Carrying on from that, this post will cover three methods you can use for successful testing, two types of testing to help you take performance to the next level, and five common pitfalls that testers often run into. I’ll also illustrate these points with examples from our own internal testing efforts.

Deciding which method to use

Designing a good experiment is actually the most important step in getting actionable results. Which testing method you use will depend on what data you have available and what variables you are trying to test. In general, there are three basic types of testing methods:

- Drafts and experiments

- Scheduled A/B tests

- Before/after tests

Each of these methods comes with pluses and minuses.

Drafts and experiments

Drafts and experiments are the most versatile testing tools. This two-part testing method lets you propose and test changes to your Search campaigns.

Drafts let you create a mirror image of your campaign and then change the element(s) you want to test. This lets you explore which settings you can change without messing up your current campaigns. Once you’ve created your draft, you can turn it into an Experiment.

Experiments help you measure your results to understand the impact of your changes before you apply them to a campaign. Once you’ve completed your Draft setup, you convert it to an Experiment and choose a percentage of your traffic to run the test on, as well as a time frame.

Through this method, you can test almost anything within your campaign. You can test structural elements of your campaigns like Ads, landing pages or match types. You can also test the influence of bidding variables like bid amounts, modifiers (device, schedule, geo-targeting) and strategies (eCPC, Target CPA). Finally, this method allows you to test changes within features in your campaigns, such as Ad extensions or Audience lists.

Unfortunately, drafts and experiments are currently only available for Text ads.

Example: A/B test landing pages with drafts and experiments for conversion rate

In this example, we want to know which of two landing pages gets the most conversions.

To set it up, create a draft of your campaign, change the landing page URL and set it as an experiment. For the analysis, keep track of top line performance using the automatic scorecard displayed in the experiment campaign.

In this case, our new landing page didn’t perform as well as the old one.

Once the experiment has run its course, make sure to take a deep dive into the data to rule out any irregularities.

Manually-scheduled A/B tests

There are still some scenarios where manually-scheduled A/B tests are the better choice. In these tests, the variants run alternately instead of simultaneously. They are especially useful in cases where drafts and experiments won’t work, because alternating the schedule stops your campaigns from potentially cannibalizing each other.

This kind of testing works best for changes where the search query composition is important, i.e., match type changes and negative keyword changes. It also allows you to test the structure, bidding and features of your Google Shopping campaigns.

Recommendation: Use this scheduling to avoid cannibalization while still being independent of seasonality

To use manual A/B tests, create a duplicate of your campaign, change one element and use the campaign settings to split the hours evenly between the two.

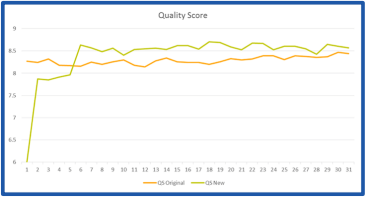
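One way to split hours evenly is to alternate the two campaigns hour by hour and flip the pattern every week. The sketch below is an illustration under that assumption, not the exact schedule we used; the function name `alternating_schedule` and the campaign labels "A" and "B" are hypothetical. It only produces a plan that you would then enter into each campaign's ad schedule by hand.

```python
DAYS = ["Mon", "Tue", "Wed", "Thu", "Fri", "Sat", "Sun"]


def alternating_schedule(week_index=0):
    """Assign every (day, hour) slot of one week to campaign "A" or "B".

    Slots alternate hour by hour, the pattern shifts by one hour each day,
    and it flips from week to week. Over any two consecutive weeks, each
    campaign therefore runs in every hour of every weekday exactly once.
    """
    schedule = {}
    for day_index, day in enumerate(DAYS):
        for hour in range(24):
            parity = (day_index + hour + week_index) % 2
            schedule[(day, hour)] = "A" if parity == 0 else "B"
    return schedule


if __name__ == "__main__":
    week_one = alternating_schedule(week_index=0)
    hours_for_a = sum(1 for owner in week_one.values() if owner == "A")
    print(f"Campaign A runs {hours_for_a} of {len(week_one)} hourly slots")
```

Flipping the pattern weekly is a design choice: it keeps one campaign from always owning the same hours, such as weekday evenings, which would otherwise bias the comparison.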

Example: How fast do quality scores pick up after campaign transition?

To set it up, duplicate the campaign and set a schedule to run it against the original campaign. For your analysis, compare traffic and Quality Score levels. In this example, we can see that Quality Scores pick up within a few days.

Before/after tests

Before/after tests are a versatile type of testing often used for feed components. In this type of testing, it’s important that you have a good control group; that way you will know how much of the performance uplift is due to seasonal or budget changes and how much is due to your experiment.

Before/after testing is best for things that are difficult or take a long time to change, such as product titles, images and prices. In these tests, you are measuring the relative change between your test and control groups. This is often the only way to test variables in Google Shopping.

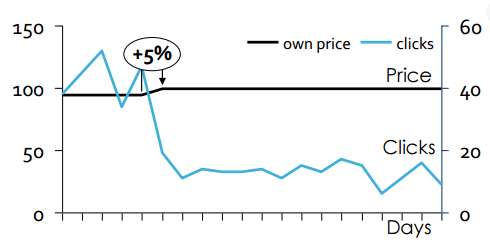
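The relative change between test and control can be expressed as a simple ratio of ratios. The following is a minimal sketch, assuming you have one total per group for the period before and after the change; the function name `relative_uplift` and all numbers are hypothetical and are not results from our tests.

```python
def relative_uplift(test_before, test_after, control_before, control_after):
    """Return the test group's change relative to the control group's change.

    A value of 0.0 means the test group moved exactly like the control group.
    A value of -0.25 means the test group ended up 25 percent below where it
    would be if it had followed the control group's trend.
    """
    test_change = test_after / test_before
    control_change = control_after / control_before
    return test_change / control_change - 1.0


if __name__ == "__main__":
    # Hypothetical click totals: the control group dipped seasonally,
    # so only part of the drop in the test group is due to the change.
    uplift = relative_uplift(
        test_before=1000, test_after=600,
        control_before=2000, control_after=1800,
    )
    print(f"Uplift relative to control: {uplift:.1%}")
```

Dividing by the control group's change removes whatever affected both groups alike, such as seasonality or budget shifts, so the remainder can be attributed to the tested change with more confidence.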

Example: Does Google reward cheaper product prices with more impressions?

Here we want to know if Google is more likely to show lower-priced products in Google Shopping. To set it up, choose a product and increase its price from lowest to highest among competitors. To analyze the results, compare traffic before and after the change, using your control group as a baseline.

In this case, a small 5 percent increase in price had a huge negative effect on the number of clicks. You can read more about our theory on Google’s low-price bias here.

Google Merchant Center experiments

Right now, before/after tests are the only way for you to test how product information (title, image, description) affects Google Shopping performance. However, Google is beginning to test allowing for feed optimizations directly in the Merchant Center interface. These tests include Phase 1 and Phase 2 in comparison to the baseline.

However, the idea is still in beta, and there isn’t much evidence around whether or not it works. The biggest issue we’ve noticed with this method is that Google randomizes the products that can be included in both the test and the control group, meaning the suggestions aren’t based on the true uplift potential of the account.

For more on how we’ve tested Feed Titles in the past, check out this Search Engine Land post.

A/B testing tools

Calculating whether or not the results of your test were statistically significant can be tricky. Luckily, there are plenty of online A/B testing tools that can help. You upload your data and then run statistical tests on your success metrics, which can include clicks, conversions or impressions.
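If you would rather check significance yourself, a two-proportion z-test is one common choice for comparing conversion rates. The sketch below is not necessarily what any particular online tool runs; it assumes each click is an independent trial and that both variants have reasonably large samples. The function name and the example numbers are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a, clicks_a, conv_b, clicks_b):
    """Two-sided z-test for a difference between two conversion rates.

    Returns (z, p_value). Uses the pooled-proportion standard error.
    """
    if clicks_a <= 0 or clicks_b <= 0:
        raise ValueError("Both variants need at least one click")
    pooled = (conv_a + conv_b) / (clicks_a + clicks_b)
    if pooled <= 0 or pooled >= 1:
        raise ValueError("Test is undefined when no or all clicks converted")
    std_err = sqrt(pooled * (1 - pooled) * (1 / clicks_a + 1 / clicks_b))
    z = (conv_b / clicks_b - conv_a / clicks_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


if __name__ == "__main__":
    # Hypothetical data: old landing page (A) vs. new landing page (B).
    z, p = two_proportion_z_test(conv_a=120, clicks_a=4000,
                                 conv_b=90, clicks_b=4000)
    print(f"z = {z:.2f}, p = {p:.3f}")
```

A p-value below your chosen threshold (0.05 is a common convention) suggests the difference is unlikely to be due to chance alone. For metrics that are not simple rates, such as revenue per click, a different test would be needed.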

Optimizing current accounts and performance

There are two reasons you might conduct a test within your PPC campaign. The first is to optimize the parameters within the Google sandbox to get better Google KPIs. In this case, you are testing your ads to optimize performance directly. This is a necessary step to make sure your account is performing at optimum level.

The second is to help you understand what the black box does. In this case, you are testing your understanding of how Google works. Knowing how Google does what it does (or even exactly what it is doing) can help you inform and improve your strategy and may allow you to gain an early advantage over your competition.

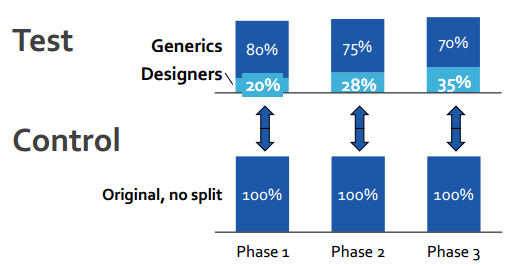

Optimization example: Shopping campaign segmentation

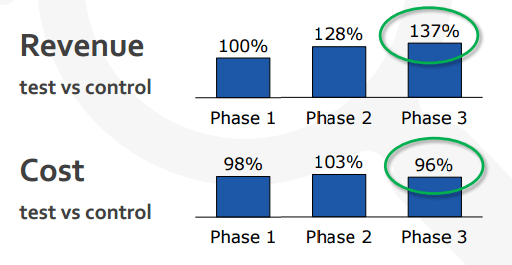

A few years ago, we theorized that splitting Shopping queries into generic and designer campaigns would save advertising costs while maintaining revenue. Using campaign priorities and negatives, we designed an AdWords structure that would force Google to split traffic into a generic or designer campaign based on the shopper’s query, for which we could then set different bids. We wanted to test whether this campaign structure would be more effective than the regular AdWords structure.

To test our new campaign structure, we used a rotating A/B test. We duplicated the products into a test campaign, applied the new structure and gave the designer campaign a higher bid. Then we rotated by scheduling.

Turns out we were right. Queries with higher conversion probability get more exposure, overcompensating for the higher CPC.

What we learned

- A/B testing campaign setups is possible.

- To keep results comparable, keep either cost or revenue stable.

- Don’t measure the uplift of the test campaign itself, only the overall change in relation to the control group, to eliminate outside influences like seasonality.

Black box example: Bidding on products is like ‘Broad Match’

Another big question we had when we started using Google Shopping was about how Google’s bidding algorithm worked. Google was pretty tight-lipped when it came to what was going on behind the scenes, but from our cursory observations, it seemed as though higher bids led to a larger share of lower-converting traffic.

To test our hypothesis, we increased bids on brand campaigns by 200 percent. As we expected, our impressions skyrocketed, while our conversions remained stable.

Our results indicated that beyond a certain bid level, your traffic quality gets weaker as you increase your bid, just like with broad match keywords. Essentially, you’re just paying more for the same traffic, which makes overbidding in Shopping a real problem.

What we learned

- Pure before/after tests need multiple sibling tests to validate the results; we tested several brands with the same results.

- Look beyond your hypothesis for additional insights — same traffic at a higher CPC was surprising.

- Always segment out queries, device, top vs other, search partners and audience vs. non-audience.

For more on how we drew conclusions on Google’s bidding algorithm and how to structure your campaigns, read this article.

Common pitfalls

There are lots of things that can bias the results of your tests, making them unusable. Here are five pitfalls we’ve encountered and how to overcome them.

1. Statistical significance

You should only end a test when you have enough information for it to be statistically significant. If you only run a test for two weeks, you might think something has no effect, when really it just takes a while for the effect to kick in.

This is especially true when working with Google. Their algorithm needs time to learn and adjust to the changes you’ve made. Use the tools we talked about earlier to help you evaluate whether your results are statistically significant.

2. Don’t aggregate

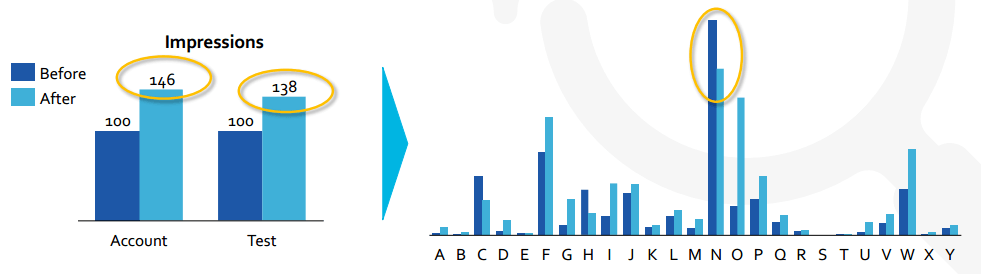

Don’t just analyze the totals of any one metric. Instead, measure the change for each element you actually changed. In this example, if you look at the total aggregated data, it looks as though changing the title actually hurt impressions.

However, when we look at all the data individually, we can see that in every case except one, impressions increased by an average of 116 percent. In this case, one very large outlier completely skewed our aggregated data.
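The effect is easy to reproduce with made-up numbers. The sketch below uses hypothetical impression counts, not the data from our test, and the helper names are illustrative. It compares the change in the aggregated total with the change for each individual title.

```python
from statistics import mean, median

# Hypothetical impressions per product title as (before, after).
impressions = {
    "title_1": (100, 230),
    "title_2": (200, 400),
    "title_3": (150, 330),
    "title_4": (120, 250),
    "title_5": (50_000, 30_000),  # one very large outlier
}


def aggregate_change(data):
    """Relative change of the summed totals across all items."""
    before = sum(b for b, _ in data.values())
    after = sum(a for _, a in data.values())
    return after / before - 1.0


def per_item_changes(data):
    """Relative change for each item on its own."""
    return {name: after / before - 1.0
            for name, (before, after) in data.items()}


if __name__ == "__main__":
    changes = per_item_changes(impressions)
    print(f"Aggregate change: {aggregate_change(impressions):+.1%}")
    print(f"Mean per-item change: {mean(changes.values()):+.1%}")
    print(f"Median per-item change: {median(changes.values()):+.1%}")
    for name, change in changes.items():
        print(f"  {name}: {change:+.1%}")
```

Because the outlier carries most of the volume, the aggregate figure is negative even though four of the five titles more than doubled their impressions. Looking at the median alongside the mean is one way to spot this kind of skew.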

3. Think outside the box

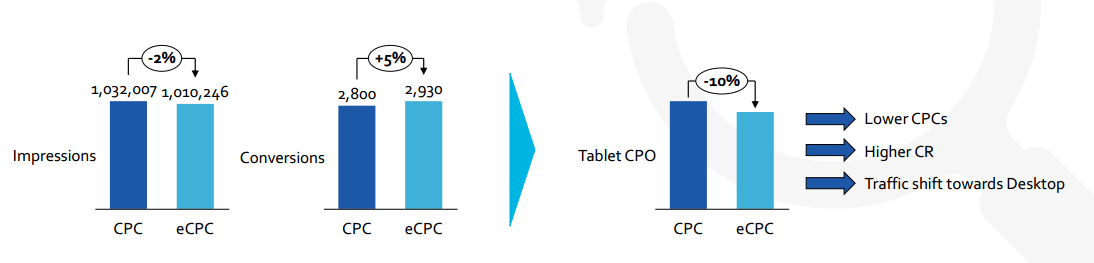

Regardless of what you ran your test to look for, you don’t have to limit your observations to the original changed variable. There are plenty more insights to be gained from other changes in your campaigns. For example, when we tested Enhanced CPC (eCPC), we noticed that it increased conversions by 5 percent. Then, upon further analysis, we noticed that eCPC helped lower the cost per order (CPO) of ads on tablets.

4. Know your surroundings

With any experiment, it’s important to think about what other factors may have influenced your results. The data alone doesn’t always tell the whole story.

For example, when we first looked at testing images, sometimes the change produced better results and sometimes it didn’t. Based solely on this data, we would have had to rule our results inconclusive.

But, just to be extra sure, we took a closer look at the testing environment. What we found was that in cases where changing the image made it stand out from all the other images, we saw uplift. However, if there was already a variety of image types on the page, changing the product image had no effect.

5. Look out for cannibalization

This is another form of understanding your surroundings when running a test. Sometimes a product’s increased performance means that it is diverting traffic away from some of your other products.

For example, when we increased the bids on one of our customer’s products, we saw a significant increase in impressions. However, when we looked at total account performance, it turned out that the product with the increased bid was cannibalizing other products by taking impressions they would usually have received.

Based on that information, we were able to conclude that the actual incremental improvement was much lower than initially observed.
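A rough way to quantify this is to compare the gain on the changed product with the net gain across the whole account. The sketch below is a simplified illustration with hypothetical product names and impression counts; it ignores seasonality, which you would still correct for with a control group.

```python
def incremental_gain(before, after, boosted):
    """Split a product's gain into incremental and cannibalized parts.

    before, after: dicts mapping product name to impressions per period.
    boosted: name of the product whose bid was increased.
    Returns (product_gain, account_gain, cannibalized).
    """
    product_gain = after[boosted] - before[boosted]
    account_gain = sum(after.values()) - sum(before.values())
    cannibalized = product_gain - account_gain
    return product_gain, account_gain, cannibalized


if __name__ == "__main__":
    # Hypothetical impressions before and after raising the bid on sku_a.
    before = {"sku_a": 1000, "sku_b": 800, "sku_c": 600}
    after = {"sku_a": 1600, "sku_b": 550, "sku_c": 450}
    product, account, lost = incremental_gain(before, after, boosted="sku_a")
    print(f"Gain on boosted product: {product}")
    print(f"Net gain across account: {account}")
    print(f"Impressions taken from other products: {lost}")
```

In this made-up case, the boosted product gains 600 impressions, but the account as a whole gains only 200, so two-thirds of the apparent uplift came from the advertiser's own products.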

Takeaways

Testing is an essential part of any good PPC strategy because it allows you to gain a significant advantage and can lead you to some major campaign improvements.

However, you can’t just wade in and start changing things willy-nilly. Accurate testing requires a detail-oriented approach and a lot of planning.

Here are the four things you should have before attempting any significant tests:

- PPC experience. In order to derive smart hypotheses and come up with intelligent testing methods that take into account external factors, a significant amount of PPC experience is invaluable. Experience also helps when analyzing the data for insights, since you’ll have a good idea of what to look out for in terms of extra insights and variables that may affect your results.

- Loads of data. Some experience with data science or at least data warehousing will definitely be beneficial. Before you begin any tests, make sure you have a way to store, clean and analyze the data you collect.

- A knack for numbers. Liking data, numbers and analytics will make wading through all that data you collected a lot more pleasurable.

- The big picture. Data miners and scientists aren’t everything. You need to make sure someone in your testing team understands the bigger picture. This high-level thought process enables you to pull back and ask why something might be happening, which is often even more important than the observation that it is happening.

This article was adapted from a keynote talk I gave at SMX London. You can see the slides from that presentation here.
